
When we talk about Agile software development, it’s impossible not to mention tests.

The ‘fail fast’ principle also entails test-driven development and continuous delivery. We no longer wait for the application to reach a test environment, where someone is responsible for clicking a bunch of buttons until they figure out that the software doesn’t work as expected. We now have pipelines that allow us to run automated tests before our application gets to production, lessening the need for manual tests and making developers equally responsible for the quality of the software they’re developing.

However, there are still lots of questions about how and when to test. With so many different types of tests, which ones should we have in our system? How many tests are enough? What exactly should we test? If metrics tell us our code is 100% covered, does it mean we don’t have any bugs?

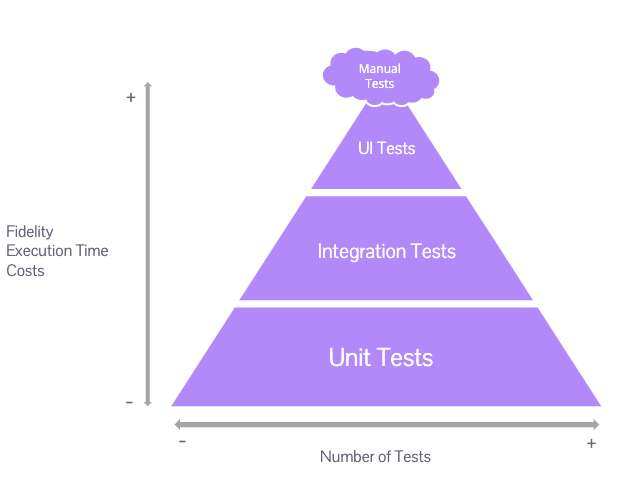

Trying to answer these questions, Mike Cohn published a book in 2010 called Succeeding with Agile, in which he presented the concept of ‘the test pyramid’. The pyramid is a guide on how to write effective tests, in the right places and with the right context. Since then, the pyramid has been praised and repudiated – and other pyramids have been created, as well as some anti-patterns. It is a great starting point for us to understand how to balance the testing strategy in our systems.

We want to make sure that everyone involved in the software development process is able to understand this subject – not just devs and quality analysts. Therefore, in the same way that we have the Agile Manifesto, we have the Testing Manifesto, which shows us important things to keep in mind when we talk about quality.

Let’s prevent the creation of bugs instead of finding them. Let’s write tests while coding and not just when the system is ‘ready’. Let’s understand the details of what we are developing instead of checking functionality. Let’s build the best system that we can and not find ways to break it. And finally, the quality of our software depends on the team and not just on one person.

Now, before we get into the world of pyramids, we need to understand the types of tests that we hear about most.

Types of tests

Unit tests

We can consider a unit as the smallest part of our code, or even the smallest testable part of our application. Depending on the language we use, it can be a function, a method, a subroutine, or a property. We can find different definitions for unit tests, but it’s important to remember that they test small and independent parts of code and that they verify that these parts do what they are expected to do. If our unit needs to communicate with another part of the code, we can use mocks and stubs. We can write unit tests for any parts of the system, such as repository classes, controllers, and domain. It’s ideal to have a test class for every production code class.
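As a rough illustration of isolating a unit with a stub, here is a minimal Python sketch using the standard library's `unittest.mock`. The `PriceCalculator` class and its `tax_service` collaborator are hypothetical names invented for this example; they are not taken from any real system.

```python
from unittest.mock import Mock


class PriceCalculator:
    """Hypothetical unit under test: computes a total using an injected tax service."""

    def __init__(self, tax_service):
        self.tax_service = tax_service

    def total(self, net_amount, country):
        rate = self.tax_service.rate_for(country)
        return round(net_amount * (1 + rate), 2)


def test_total_applies_tax_rate_from_service():
    # Stub the collaborator so the test never touches a real tax service.
    tax_service = Mock()
    tax_service.rate_for.return_value = 0.25

    calculator = PriceCalculator(tax_service)

    assert calculator.total(100.0, "BR") == 125.0
    # Mock-style verification: the collaborator was asked for the right country.
    tax_service.rate_for.assert_called_once_with("BR")
```

Because the collaborator is replaced, a failure here points at `PriceCalculator` itself rather than at whatever sits behind the tax service.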

Integration tests

Do you remember doing group projects at school, with a different person responsible for each part of the work? Then, on the day of delivery, you would put all the parts together and nothing would make sense. Yes? That’s why, in the software world, we need integration testing. We already know that the small parts of our code are working as expected, each one independent of the others. We need to make sure that, when they communicate with each other, things are going to work as intended.
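To make the difference from a unit test concrete, the sketch below wires two hypothetical parts together, a `RegistrationService` and a `UserRepository`, and runs them against a real (in-memory) SQLite database instead of a mock. The class names and the table layout are assumptions made for illustration only.

```python
import sqlite3


class UserRepository:
    """Hypothetical persistence layer backed by SQLite."""

    def __init__(self, connection):
        self.connection = connection
        self.connection.execute(
            "CREATE TABLE IF NOT EXISTS users (email TEXT PRIMARY KEY, name TEXT)"
        )

    def add(self, email, name):
        self.connection.execute(
            "INSERT INTO users (email, name) VALUES (?, ?)", (email, name)
        )

    def find_name(self, email):
        row = self.connection.execute(
            "SELECT name FROM users WHERE email = ?", (email,)
        ).fetchone()
        return row[0] if row else None


class RegistrationService:
    """Hypothetical domain service that depends on the repository."""

    def __init__(self, repository):
        self.repository = repository

    def register(self, email, name):
        normalized = email.strip().lower()
        if self.repository.find_name(normalized) is not None:
            raise ValueError(f"{normalized} is already registered")
        self.repository.add(normalized, name)


def test_registration_persists_and_rejects_duplicates():
    # Real collaborators: the service talks to the repository, which talks to SQLite.
    connection = sqlite3.connect(":memory:")
    repository = UserRepository(connection)
    service = RegistrationService(repository)

    service.register("Ada@Example.com ", "Ada")
    assert repository.find_name("ada@example.com") == "Ada"

    try:
        service.register("ada@example.com", "Someone else")
    except ValueError:
        pass
    else:
        raise AssertionError("expected a duplicate registration to be rejected")
    connection.close()
```

A test like this is slower than a unit test, but it can catch problems that only appear when the parts meet, such as a mismatch between the normalized email and what the query looks up.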

Contract testing

When we leave the world of monoliths and start talking about microservices and communication with external APIs, it’s important to talk about another type of test: contract testing. This type of test ensures that the contract between provider and client is not broken. We need to make sure there have been no changes to the structure or the types of the data provided.

Different from integration tests, contract tests are focused on comparing types of data exchanged between client and provider with a contract file. If the test is broken, we know there is a change in the provider, or that modifications made by the client now expect something different.

Contract tests should be in a separate suite as we can deal with API instability and, if they are part of the same suite, we might be making excessive requests.
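The idea of comparing exchanged data types against a contract file can be sketched as follows. This is a simplified, hand-rolled check rather than a real contract-testing tool; the contract format, the field names, and the `contract_violations` helper are all assumptions made for illustration.

```python
import json

# Hypothetical contract, as it might be stored in a file shared by client and provider.
CONTRACT_JSON = '{"id": "integer", "name": "string", "price": "number", "tags": "array"}'

# Mapping from the contract's type names to Python types (an assumption of this sketch).
TYPE_NAMES = {
    "integer": int,
    "string": str,
    "number": (int, float),
    "array": list,
}


def contract_violations(payload, contract):
    """Return a list of human-readable differences between payload and contract."""
    violations = []
    for field, type_name in contract.items():
        if field not in payload:
            violations.append(f"missing field: {field}")
        elif not isinstance(payload[field], TYPE_NAMES[type_name]):
            actual = type(payload[field]).__name__
            violations.append(f"{field}: expected {type_name}, got {actual}")
    return violations


contract = json.loads(CONTRACT_JSON)

# A provider response that honours the contract.
good_response = {"id": 7, "name": "Keyboard", "price": 49.9, "tags": ["usb"]}
# A response where the provider changed `price` to a string and dropped `tags`.
changed_response = {"id": 7, "name": "Keyboard", "price": "49.90"}

assert contract_violations(good_response, contract) == []
assert contract_violations(changed_response, contract) == [
    "price: expected number, got str",
    "missing field: tags",
]
```

In a real suite, the response would come from the provider (or a recorded interaction) and the check would typically be delegated to a dedicated contract-testing library.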

UI tests

This type of test makes sure the user interface (UI) of your application works correctly. We ensure that user input triggers the right actions, that data is presented to the user, and that UI state changes as expected. Sometimes, it is said that UI tests and end-to-end tests are the same thing.

Yes, testing your application end-to-end often means driving your tests through the user interface. The inverse, however, is not true. UI tests can verify that clicking a button returns the correct behavior to the user, but they do not care where exactly the button is.

End-to-end tests

This type of test gives us more confidence in ensuring that the software works as expected. There are several tools that allow us to automate our tests, guiding our browser against our services, executing clicks, inserting data, and verifying the state of the user interface. Unlike UI tests, end-to-end considers the user journey. So, it needs to be connected directly to the system logic.

Different contexts, different pyramids

Mike Cohn’s pyramid looks at how to think about our test strategy, intending to have a larger number of tests that are fast to develop and that give us quick feedback at a lower cost. It also recommends having fewer of the tests that take more time to develop and that have a high cost.

When thinking about test pyramids, we automatically imagine a complex system with a backend and frontend. But we know that this is not always the reality, and it is important to understand that it is possible to adapt the pyramids to different contexts.

How would we think of a test pyramid for a legacy system that requires changes in functionalities that are in production and also the creation of new features? To illustrate this, we will talk about two contexts: a backend-only project and a frontend-only project.

When we have a project that only has a backend, there is the possibility of a pyramid similar to the traditional test pyramid – with unit tests, integration tests, contract tests, and even functional tests to evaluate an end-to-end journey, without the need to test the frontend. Within these tests, we can also have safety and performance tests and create a pipeline that automates everything without having to rely on manual tests.

However, in a project with only a frontend, a traditional test pyramid may not make sense. Unit tests will not be the majority. It is necessary to guarantee the user’s journey, and also accessibility using end-to-end and integration tests to make sure that the points important to the business are covered. For this scenario, the ‘test trophy’ concept might make more sense.

“The Testing Trophy”

A general guide for the **return on investment** of the different forms of testing with regards to testing JavaScript applications.

– End to end w/ @Cypress_io ⚫️

– Integration & Unit w/ @fbjest

– Static w/ @flowtype and @geteslint ⬣

pic.twitter.com/kPBC6yVxSA – Kent C. Dodds (@kentcdodds), February 6, 2018

The main point is that we need to understand the context we are in to know if the test pyramid can apply to it or not. Also, we should keep in mind that the test pyramid is a concept to help us create strategies that will help us with the delivery of the best product, but nothing is written in stone – everything should be tried out and improved over time.

The extended test pyramid

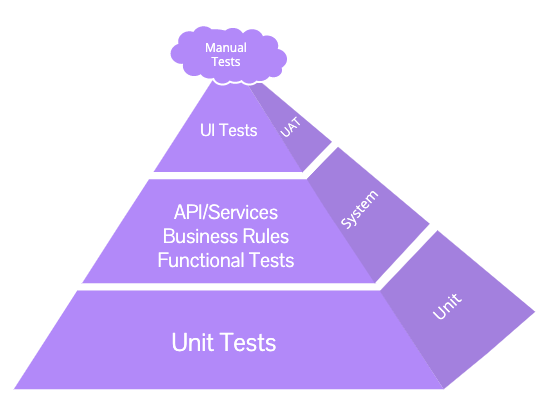

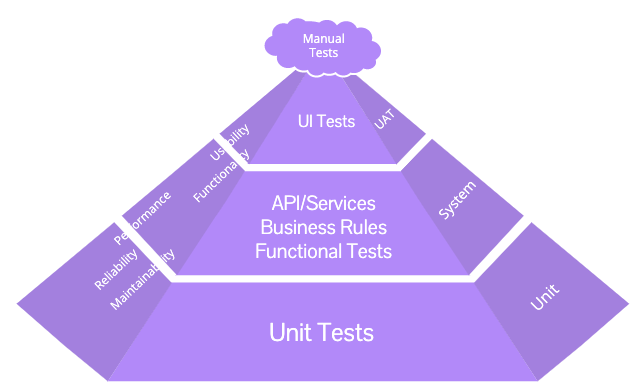

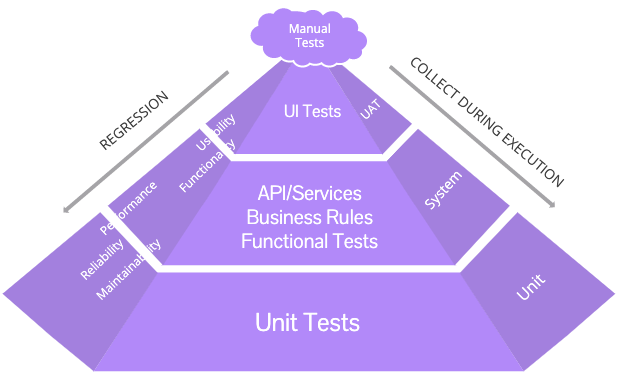

Besides looking at the pyramid from different contexts, we can also look at it from different angles. For this, we can talk about the expanded test pyramid. This concept was introduced in More Agile Testing, written by Janet Gregory and Lisa Crispin. It brings a more complete view of the pyramid, expanding it in different dimensions. It shows us a comprehensive view of possibilities and needs that must be taken into account when building a quality strategy for software development.

Based on an image in More Agile Testing

In the center, the traditional view tells us what each layer means and what it is designed to test. This helps in creating a test strategy appropriate to the software.


On the right, we see the dimension that encompasses the tools that we can use to perform these tests, and each tool is chosen for a specific type of test. For example, in the functional and business rules test layer, we are analyzing the system tests and making sure that the fundamental functionality of each of the elements of the architecture works individually and together.

In the left dimension, we have the CFRs (cross-functional requirements), which give us a view of what needs to be defined and evaluated as criteria that directly affect the end-user, and that can be thought of in several layers. While functional requirements tell us what to do, cross-functional requirements show us how it will be done. We think of usability, accessibility, performance, security, and many other requirements that may not be related to code development specifically, but are equally important.


Visually, it is very difficult to represent the fourth side of the pyramid, so the regression tests are represented in lines that run along the third side, indicating that the regression can be in any attribute of the system that was considered as part of the test.

After each type of test is assigned to the correct test level, it is possible to understand how many tests can be developed at each layer, which allows working on quality metrics at various levels and makes our view of the pyramid much more complete.

What not to do: anti-patterns

When trying to implement an efficient test strategy, we can fall into some traps. There is, for example, the ice cream cone anti-pattern. This happens when we don’t have many unit or integration tests, but we have too many end-to-end ones (those that happen at the UI level), and even more manual tests. These tests tend to fail at any UI change, and teams end up spending a good amount of time trying to fix or update these UI tests and repeating regression tests manually.

How can we avoid this? By investing more in automating tests at a lower level.

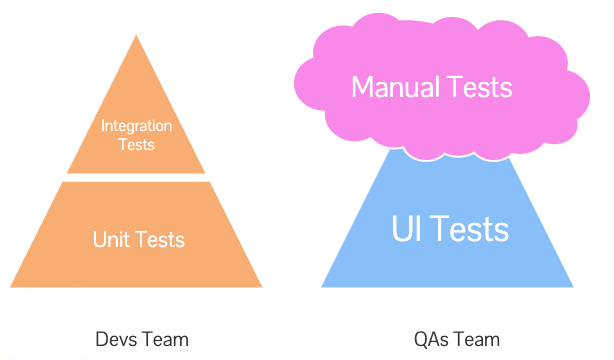

However, some situations can lead to another anti-pattern. Let’s say the project you work on has separate teams writing tests at different levels. Your team is responsible for developing code and writing unit and integration tests; another team is responsible for writing end-to-end tests; yet another team is responsible for manual tests. If these teams are not in sync, we can end up in the cupcake anti-pattern, presented by Fábio Pereira. And, well, that’s not very Agile.

Lack of communication between different teams can cause duplication in test cases and, even worse, some scenarios end up not being tested at all. This anti-pattern is also known as the dual test pyramid.

Test coverage

How can we know if we’re testing everything we should test, and how much of our code is being tested? That’s where test coverage comes into play. The idea is to help us monitor how much of the code our tests exercise and to guide us in creating tests for areas that were missed or have not been previously validated.

Several tools allow us to monitor these metrics. However, it’s important to keep in mind that having 100% test coverage doesn’t say much about the quality of our tests. Lots of teams work hard to reach a specific coverage figure. Yes, a low number is probably a sign that something is wrong. But a high number is not a guarantee that our tests are of high quality. We can easily learn to write tests that please the metrics but don’t help validate our code. Remember that the important part is what you’re testing and how you’re testing it, not just the number of tests.
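A small Python sketch shows how a test can satisfy a coverage metric without validating anything. The `apply_discount` function and both test functions are hypothetical, and the bug is deliberate.

```python
def apply_discount(price, percent):
    """Intended to reduce `price` by `percent` percent. Contains a deliberate bug."""
    return price - percent  # bug: subtracts the raw number instead of a percentage


def coverage_pleasing_test():
    # Executes every line of apply_discount, so a line-coverage tool would
    # report it as fully covered, yet nothing about the result is checked.
    apply_discount(200, 10)


def meaningful_test():
    # 10% off 200 should be 180; this assertion exposes the bug.
    assert apply_discount(200, 10) == 180


coverage_pleasing_test()  # passes silently

try:
    meaningful_test()
except AssertionError:
    print("meaningful_test caught the bug")
```

Both tests produce the same coverage number for `apply_discount`, but only the second one would stop the defect from reaching production.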

Continuous delivery

How can we get fast feedback about how changes we make affect software? We need to use an approach that allows quality to be incorporated into the software.

Continuous delivery is an essential part of this process. When we have automated tests and we are working with continuous integration, we can create a deployment pipeline. In this pipeline, each change that we make creates a package that can be deployed to any environment, runs unit tests, and provides instant feedback for developers. If unit tests pass, the pipeline goes to the second phase, where automated acceptance tests are run, and so on. After all the tests are run in different steps, and all of them pass, we have a package available for deployment in other environments, in which exploratory and usability testing can be done, for example. The pipeline should adapt to each project according to its needs. The main point is that we can use a deployment pipeline to get fast feedback. Later on, if we find defects, it means we should improve our pipeline by adding or changing our automated tests.
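The staged behavior described above, where each phase only runs if the previous one passed, can be sketched in a few lines of Python. This is a toy model, not a real CI configuration; the `run_pipeline` function and the stage names are assumptions, and each stage is reduced to a callable that reports pass or fail.

```python
from typing import Callable, List, Tuple

# A stage is a name plus a check that returns True when the stage passes.
Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> Tuple[bool, List[str]]:
    """Run stages in order and stop at the first failure for fast feedback."""
    log = []
    for name, check in stages:
        passed = check()
        log.append(f"{name}: {'passed' if passed else 'failed'}")
        if not passed:
            return False, log
    return True, log


# Hypothetical run in which the acceptance tests fail.
stages = [
    ("unit tests", lambda: True),
    ("acceptance tests", lambda: False),
    ("publish package", lambda: True),
]

succeeded, log = run_pipeline(stages)
assert succeeded is False
assert log == ["unit tests: passed", "acceptance tests: failed"]
```

Putting the cheapest, fastest checks first means most problems are reported within the first stage, and the slower stages only spend time on changes that have a chance of being released.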

How to balance the test pyramid?

Now that we know the main types of tests, the ideal pyramid, and all the patterns we should not follow, do we know exactly how to implement our test strategy?

No! There is no magic formula. The test pyramid is a simplistic representation of a strategy that leaves out several types of tests that may or may not apply to our project.

The goal is to stimulate reflection within teams so that we can understand what can be used and how we can improve our strategy. Focus on some Agile principles like testing early to get fast feedback, preventing bugs instead of finding bugs, and remembering that the whole team is responsible for quality. This way, you’ll have visibility into all possible improvements that need to be made to processes or techniques, and the quality of your product will gradually evolve.